Training StyleGAN3 on Anime Faces

Image generation has evolved tremendously over the last couple of years. Generative Adversarial Networks have emerged as state-of-the-art in the field of image generation, the latest iteration of which is StyleGAN3.

What is StyleGAN3?

GAN is an acronym for "generative adversarial network" – a machine learning framework where two neural networks are set against each other, represented as G, the generator and D, the discriminator. The generator's job is to generate fake images to trick the discriminator, and the discriminator's job is to determine whether an image is real or fake.

The cutting-edge StyleGAN3 algorithm is the latest development in a long line of advancements in GANs. This algorithm can generate incredibly realistic images and has been used by researchers to generate everything from faces to 3D objects.

While the potential applications of StyleGAN3 are vast, one of the most exciting is its potential for creating realistic images.

In the past, GANs have had difficulty generating high-resolution images without introducing artifacts. Artifacts arise when the generator network upsamples low-resolution photos in a process known as aliasing. StyleGAN3 uses a novel upsampling method that doesn't introduce these artifacts, allowing it to generate high-resolution images without aliasing.

This is a significant improvement over previous generations of GANs, and it opens up a whole new range of possibilities for image generation. For example, StyleGAN3 could be used to generate realistic faces for use in video games or movies.

In addition to its ability to generate high-resolution images, StyleGAN3 is much faster than previous generations of GANs. This is because it uses a training method called "progressive growing" that allows the StyleGAN3 network to be trained using lower resolution images initially, with more detail being gradually added later as the training progresses. This is much faster than training a GAN on high-resolution images from scratch, which can take days or weeks.

StyleGAN3 is an exciting new development in the world of generative neural networks, and it has the potential to revolutionize the field of image generation.

Training StyleGAN3

I decided to train a fork of StyleGAN3 - “Vision-aided GAN”[3] on 4x A6000s generously provided by RunPod. Training StyleGAN3 requires at least 1 high-end GPU with 12GB of VRAM.

Vision-aided GAN leverages previously trained models to improve training quality significantly. This slightly increases training time; however, the results are typically much better.

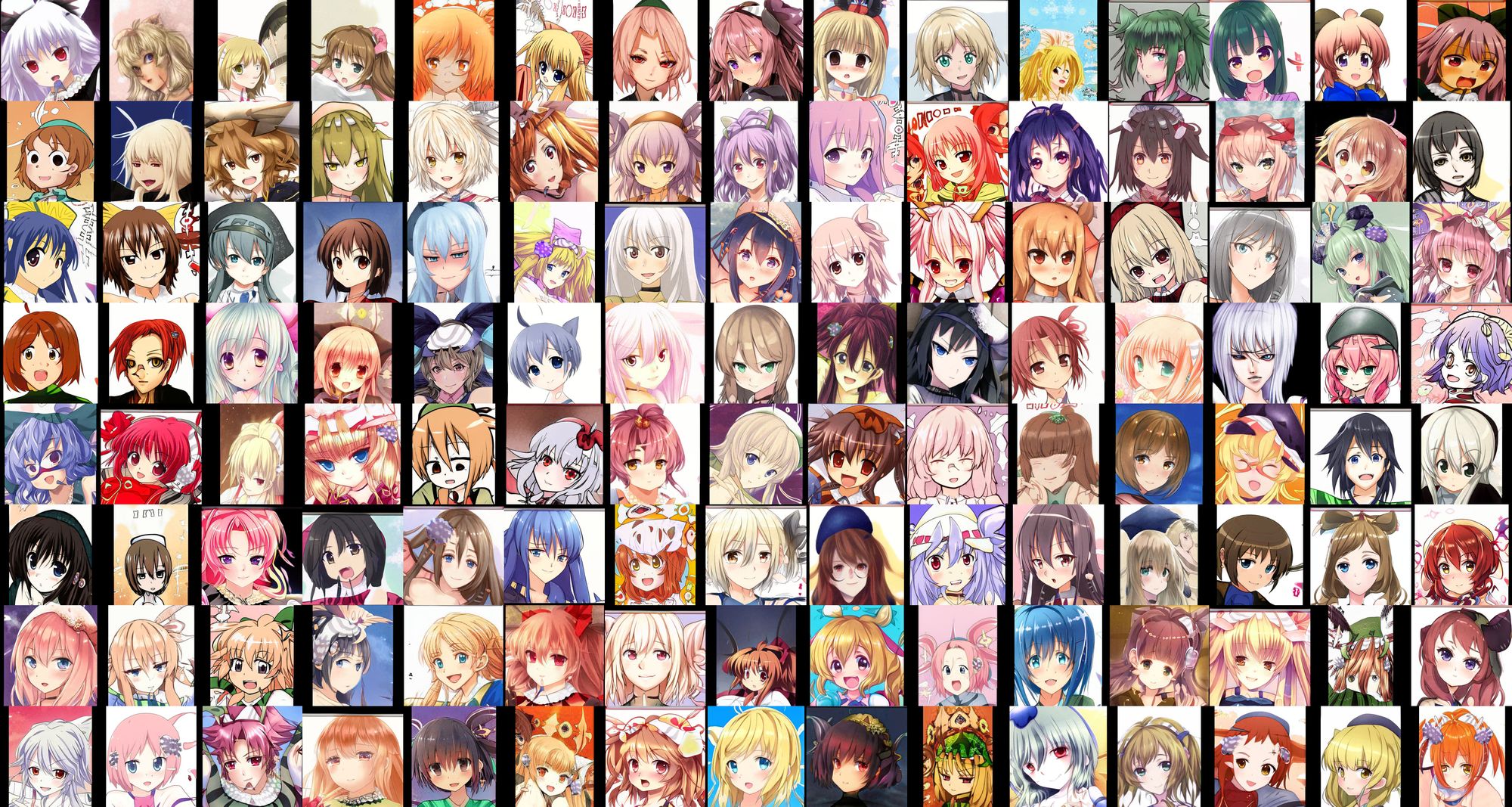

I am using the “danbooru2019 portraits”[4] subset of the danbooru2019 dataset to train (which contains approximately 300,000 images, though that volume of images is not strictly necessary). The parameters used were:

--cfg=stylegan3-t --gpus=4 --batch=32 --gamma=6 --dlr=0.001 --glr=0.001 --aug=noaug --snap=25and, for vision-aided GAN:

--cv=input-clip+dino+vgg+face_normals+face_seg-output-conv_multi_level+conv_multi_level+conv+conv+conv--cv-loss=multilevel_sigmoid_s+multilevel_sigmoid_s+sigmoid_s+sigmoid_s+sigmoid_s--warmup=0Refer to the training configurations to choose the correct values for your hardware, config and dataset. Reducing the --batch value typically warrants increasing --gamma and/or lowering the D and G learning rates (--dlr and --glr). Think of --gamma as the “randomness” or “creativity” of the model.

At about 900kimg into training, I introduced the vision-aided GAN parameters and switched to their version of StyleGAN3. After about a week of training, the model reached 11100kimg, At this point, training was terminated.

1000kimg

2000kimg

8000kimg

11000kimg

There are noticeable artifacts that seem to happen with StyleGAN3. However, the older StyleGAN2-ADA does not seem to have these artifacts. You can find the model download and more information here.

Artifacts

Closing Thoughts

StyleGAN3 is an exciting new algorithm that will revolutionize the field of image generation. It can generate high-resolution images and is much faster than previous generations of GANs. I believe that StyleGAN3 will have a significant impact on the field of image generation and I am looking forward to seeing how it continues to develop in the future, and what new exciting applications are built upon it.

References

- Alias-Free Generative Adversarial Networks (StyleGAN3) https://github.com/NVlabs/stylegan3

- Progressive Growing of GANs for Improved Quality, Stability, and Variation arXiv:1710.10196 [cs.NE]

- Vision-aided GAN https://github.com/nupurkmr9/vision-aided-gan

- Gwern Branwen, Anonymous, & The Danbooru Community; “Danbooru2019 Portraits: A Large-Scale Anime Head Illustration Dataset”, 2019-03-12. Web. Accessed 2022-06-19 https://www.gwern.net/Crops#danbooru2019-portraits

Guest authored by Exploding-cat (08-02-2022)