RunPod's Latest Innovation: Dockerless CLI for Streamlined AI Development

Discover the future of AI development with RunPod's Dockerless CLI tool. Experience seamless deployment, enhanced performance, and intuitive design, revolutionizing how you bring AI projects from concept to reality.

Revolutionizing AI Development with Dockerless CLI

RunPod is excited to announce a significant update to our Command Line Interface (CLI) tool, focusing on Dockerless functionality. This revolutionary feature simplifies the AI development process, allowing you to deploy custom endpoints on our serverless platform without the complexities of Docker.

Why Did We Make This?

RunPod has always embraced a Bring-Your-Own-Container model for our GPU Cloud and Serverless offerings. While this flexibility is a strength, it presented hurdles in the development process for our serverless endpoints. To address these, we're introducing RunPod Projects and a new Dockerless Workflow in release 1.11.0 of our CLI tool runpodctl. This approach simplifies project development and deployment, bypassing the need for Docker.

How Can I Use It?

To explore this workflow, ensure you have runpodctl version 1.11.0 or higher. The process involves configuring runpodctl with your API key, creating a new project, starting a development session, and deploying your serverless endpoint – all without the need for Docker.

How Does It Work?

The Dockerless workflow separates the components of a serverless worker, allowing for independent modification of system dependencies, custom code, code dependencies, and models. This setup streamlines the process of making and deploying changes.

Newly created projects have a specific file structure, and during development, any changes in the local project folder are synced to the project environment on RunPod. The runpod.toml file in your project directory allows you to configure various settings and paths for your project.

Key Features of Our Updated CLI Tool

We've listened to your feedback and focused our efforts on enhancing the user experience with our CLI tool. Here's what you can expect:

- Dockerless Deployment: Say goodbye to the hassles of building and pushing Docker images. Our CLI tool now enables direct deployment, making your workflow faster and more streamlined.

- Seamless Integration: Enjoy a smoother transition from development to production with our intuitive and user-friendly CLI commands.

- Enhanced Performance: Experience the efficiency of deploying AI applications with minimized delays and optimized resource usage.

- Community-Driven Development: We've incorporated your feedback into this update, ensuring our tools align with your needs and preferences.

Experience the Difference Yourself: In-Blog Tutorial

Embarking on your Dockerless development journey with RunPod is straightforward. Here's a quick guide to get you started with our innovative CLI tool, runpodctl, and embrace a streamlined AI development process.

Step 1: Configuration

Before diving into project creation, ensure runpodctl is installed and up to date (version 1.11.0 or higher). Begin by configuring runpodctl with your RunPod API key:

runpodctl config --apiKey="YOUR RUNPOD API KEY"

Step 2: Create Your Project

Create a new project named test-project by running the following command. The create step prompts you for project settings, and the trailing cd moves you into the new project folder:

runpodctl project create -n test-project && cd test-project

Open the project folder in a text editor to view the generated files, which include:

- .runpodignore for specifying files to exclude during deployment.

- builder/requirements.txt for listing pip dependencies.

- runpod.toml for project config, containing deployment settings.

- src/handler.py for your handler source code.
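The contents of the generated src/handler.py are not shown in this post, so the following is only a minimal sketch of what a handler can look like. It assumes the usual RunPod pattern of a function that receives a job dictionary with an "input" key; the "name" field and the greeting are hypothetical and chosen purely for illustration.

```python
# Minimal handler sketch; the file generated by runpodctl may differ.

def handler(job):
    """Receive a job dict and return a JSON-serializable result."""
    job_input = job.get("input", {})
    # "name" is a hypothetical input field used only for this example.
    name = job_input.get("name", "World")
    return {"greeting": f"Hello, {name}!"}


# In a real worker the RunPod Python SDK is started with the handler, e.g.:
#   import runpod
#   runpod.serverless.start({"handler": handler})

# Local check without the SDK: call the function directly.
print(handler({"input": {"name": "RunPod"}}))
```

Keeping the handler a plain function makes it easy to call locally before a development session is started.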

Step 3: Start a Development Session

Initiate a development session on RunPod with:

runpodctl project dev

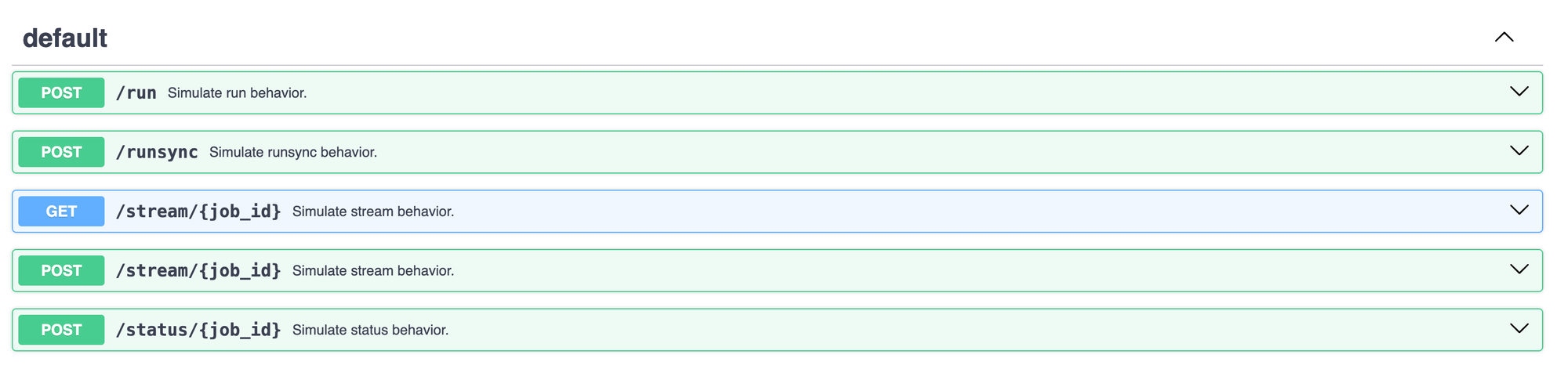

If this is your first session for the project, you'll be asked to select or create a network volume. A pod is then created, and its logs stream to your terminal. Once the dependencies are set up, the logs include a URL for the testing API page. Visit this URL to send requests to your handler and watch your code changes take effect in real time.
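Requests to the handler can also be scripted rather than sent from the testing page. The sketch below only builds a request and does not send it. The base URL is a placeholder, the /runsync route is an assumption about what the testing API exposes, and the "name" field is hypothetical; check the page served at your session URL for the actual routes.

```python
import json
import urllib.request


def build_test_request(base_url, payload):
    """Build (but do not send) a JSON POST request for the handler."""
    # RunPod jobs wrap the user payload in an "input" key.
    body = json.dumps({"input": payload}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/runsync",  # assumed route
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder URL; use the one printed by `runpodctl project dev`.
request = build_test_request("https://example.invalid/", {"name": "RunPod"})
print(request.get_method(), request.full_url)

# To actually send it: urllib.request.urlopen(request)
```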

Step 4: Deploy Your Serverless Endpoint

Once satisfied with your code, deploy it as a serverless endpoint:

runpodctl project deploy

Congratulations! You've successfully deployed a RunPod serverless endpoint without the complexities of Docker.

Understanding the Workflow

The Dockerless workflow simplifies the development process by separating the components of a serverless worker, allowing for quick modifications. This method utilizes a base Docker image filled with common system dependencies, alongside your custom code and any necessary supporting packages or models.

Frequently Asked Questions

- Why a network volume? It enhances the development experience by speeding up subsequent sessions and providing a faster method for workers to access dependencies and code at startup.

- Custom Docker image needs? The default is RunPod's base image, but you can specify another by updating base_image in runpod.toml.

- Targeting a Docker image in production? While the Dockerless workflow offers rapid development, targeting a Docker container for production can optimize cold start times. The runpodctl project build command generates a Dockerfile for this purpose.

Embark on your Dockerless development journey with RunPod and streamline your AI project workflows today.

Join the Conversation

We're keen to hear your feedback on our Dockerless CLI tool. Please head to our Discord server, create a post in our #feedback channel, and use the tag Dockerless. Your insights are invaluable to our continuous innovation, helping us refine and enhance our tools and services to better meet your needs in AI development.

With your collaboration, we're not just developing tools; we're shaping the future of AI. Explore the new possibilities with RunPod and be a part of this exciting journey.

Have you tried the new Dockerless CLI tool? What has been your experience? Share your thoughts and join the conversation.